It’s easy to default to playtest methods that feel ‘convenient’. In our survey of playtesting habits, we heard that surveys are the most used method. Although surveys feel easy to run, they are often not the most appropriate way to answer the questions teams have – such as ‘what are the biggest friction points in my game?’.

Don’t unthinkingly pick your favourite methods. Failing to validate the method choice can lead to underwhelming playtests where the data collected is vague, or does not provide a helpful steer internally.

This means results that are easy to ignore, and playtesting falling down the priority list – increasing the risk of major development pivots, or failed releases.

In this post, I introduce a reliable method for picking the right playtest method. This will ensure you generate relevant and useful playtest data.

A process for picking your playtest method

Game development often lacks a standard terminology – and the word ‘playtest’ has different meanings for different teams. For some, it means ‘any study, including QA testing’. For others, it describes a specific quantitative method to get a lot of feedback from people via lab-based surveys. Today we’re using it generically, to cover any activity that involves using external players to inspire or evaluate game development.

And the process we’ll go through looks like this:

Start with: what do you want to learn?

Before you can make sensible decisions about ‘what method to use’, you need to be clear on what you want to learn. The more concrete the better!

Gathering these objectives is typically a communal effort, speaking to colleagues and other leads about their current priorities, but it should end with a shortlist of goals for the playtest (referred to as ‘research objectives’ – what are we trying to learn?).

Some example objectives include:

- Where do players get stuck in this level?

- Why do players abandon our game in the first week?

- Do players understand how to upgrade their character?

- Which game mode do players interact with the most?

- Can players defeat this boss?

Your specific objectives should reflect the team’s current priorities, or upcoming decisions. Failing to get the team aligned behind the objectives now is only going to cause problems later (such as your study being ignored)!

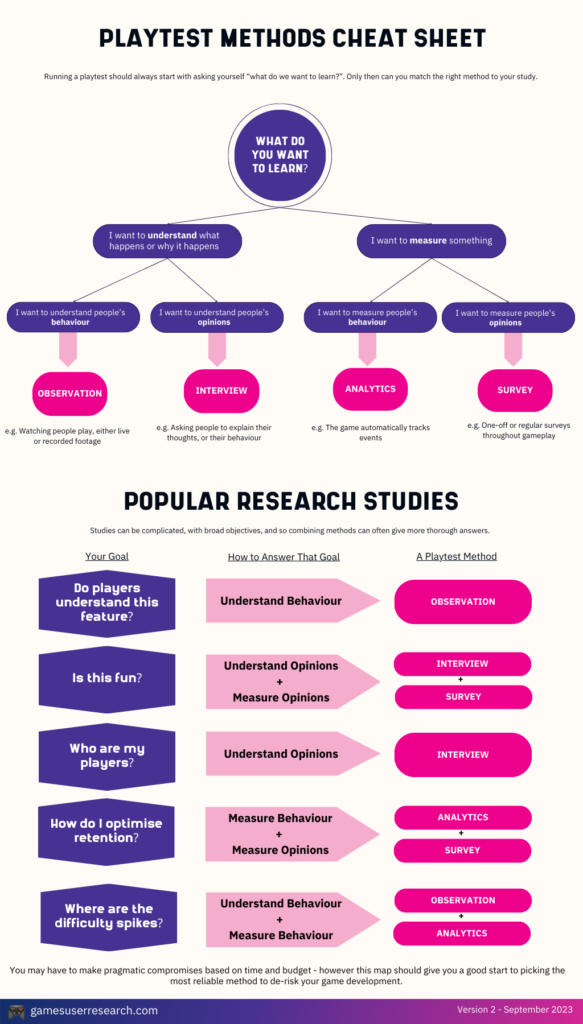

Categorising your objectives

These objectives (the questions your team has) fall into different categories. Identifying the category will allow you to pick the right research method to answer each one. Here are the questions to ask yourself.

Is this a measurement or an understanding question?

Some questions require measurement to answer. Things that can be measured include objective things that happened (such as ‘how many times players failed’), or scores (such as ‘rate the difficulty of this level’).

Measurement questions offer a chance to show an objective representation of the state of the game, and identify perceived difficulty spikes, or moments of boredom. It’s a great way of identifying where problems are, and allows benchmarking to happen – such as comparing the scores between two levels or two games.

Understanding questions allow you to explain why problems happen – such as watching players who are stuck to understand what is preventing them from progressing, or asking frustrated players why they are bored. It’s the qualitative part that gives teams the detail required to fix problems.

For each of your research objectives, categorise them by ‘measurement’ or ‘understanding’. Sometimes a research objective will cover both – such as “what abilities do players use most, and why?” – this is best broken down into two separate objectives for clear categorisation.

Is this a behavioural or opinion question?

For both measurement and understanding questions, there is a second layer of categorisation to apply.

Some questions are best answered by understanding player behaviour – what are players actually doing in the game? This is the safest kind of data to capture, because it’s objectively true (players definitely did the thing), and can be a helpful indicator of future behaviour (the reason players abandoned other games is likely to be a reason they’d quit your game). A lot of the most valuable user research work can be done by focusing on players’ behaviour, and it can answer impactful questions such as ‘why did players fail this level?’ or ‘do players know how to upgrade?’.

Other questions are framed around players’ opinions – what do they think? These more subjective questions are harder to answer reliably (because we ultimately want to change behaviour, and opinions are a worse predictor of future behaviour than past behaviour is). However, they are still a common research objective that teams want to explore – such as ‘what do players think about the main character?’.

Overall, care needs to be taken with opinion questions to avoid drawing misleading conclusions, and developers will need to be very careful with how they treat this data. At its most useful, understanding a player’s stated opinions can unlock a deeper understanding of their behaviour, because it gives an insight into what’s happening inside their head – explaining why players made the decisions they did. However, stated opinions can also mislead teams who take them at face value. Be careful!

For each of your objectives, decide whether it is best answered by capturing players’ behaviour or their opinions.

🚀 Action: For each of your objectives, follow the flow chart to identify the type of data required.

Next: pick the best method

Largely, methods fall into four buckets – observation, interviews, analytics and surveys. There are nuances and a variety of sub-methods within each of these buckets, but let’s cover each briefly.

Observation

Watching people play your game is an incredibly impactful research method.

The strength of observation is that it provides an unfiltered view of ‘what players are doing in your game’, and their true behaviour – really valuable information for inspiring iteration of your ideas and implementation.

Observation can be achieved through running live sessions, either in person or streamed over the internet, or as an unmoderated study – where players pre-record their gameplay, and you watch the video back later.

One downside of observation is ‘interpretation’. You will have seen what players do, and have some context about why they did it based on what you’ve seen in their gameplay – but without asking them questions directly, some motivation may be ambiguous and unclear.

A second downside of observation is ‘it takes a long time to do’ – you can only watch one player at a time. That means for measurement questions, it’ll take a long time to scale.

Interviews

Interviews (asking players questions) allow us to understand what’s happening in players’ heads. By asking probing questions to go deeper, game makers can move beyond shallow responses to understand players’ thoughts intimately (and with enough detail to take action). Because you are talking to the player live, you also don’t need to finalise in advance exactly what questions you will ask or how you will ask them, allowing you to react to the topics and issues that players raise.

Their responses will require interpretation – players can only represent their own worldview – but understanding that worldview will allow you to understand observed behaviour (such as why players went the wrong way, or why they abandoned the game). Again – very valuable information for taking decisions to fix emergent issues.

Interviews are best done in the moment – live with players either during their play session, or immediately after, and can be done remotely via video software if needed.

The downside of interviews is that they can only tell you opinions and worldviews – players won’t be able to accurately represent their in-game behaviour, and will attempt to apply post-hoc logic to explain their behaviour that might not be true (as we all do when talking about ourselves!). And once again, scale can be a challenge – it takes time to interview players one to one.

Analytics

Most games will look to integrate some sort of analytics or telemetry, to capture data about player behaviour automatically. Let ‘what do we want to learn about player behaviour’ inspire your decisions about what data and events to capture.

As a quantitative method, analytics’ strength is scale. You can track the behaviour of thousands of players to identify where most players fail, or whether one character or weapon is overpowered.

However, analytics struggle to explain that behaviour. You will identify that players are failing, but you won’t be able to reliably answer ‘why’ without corroborating with other methods – and the ‘why’ is essential information for fixing the issues that emerge.
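As a rough illustration of letting ‘where do most players fail?’ decide which events to capture, here is a minimal Python sketch. The event names (`level_failed`, `level_completed`), the field names and the sample events are all hypothetical, made up for this example; your own telemetry will look different.

```python
from collections import Counter

# Hypothetical telemetry events. Field and event names are assumptions for illustration.
events = [
    {"player_id": "p1", "level": "1-1", "event": "level_completed"},
    {"player_id": "p2", "level": "1-1", "event": "level_failed"},
    {"player_id": "p2", "level": "1-1", "event": "level_completed"},
    {"player_id": "p1", "level": "1-2", "event": "level_failed"},
    {"player_id": "p1", "level": "1-2", "event": "level_failed"},
    {"player_id": "p2", "level": "1-2", "event": "level_failed"},
    {"player_id": "p1", "level": "1-2", "event": "level_completed"},
]


def failures_per_level(events):
    """Count 'level_failed' events for each level."""
    return Counter(e["level"] for e in events if e["event"] == "level_failed")


def failure_rate_per_level(events):
    """Share of attempts (failed or completed) on each level that ended in failure."""
    attempts = Counter(
        e["level"]
        for e in events
        if e["event"] in ("level_failed", "level_completed")
    )
    failures = failures_per_level(events)
    return {level: failures[level] / attempts[level] for level in attempts}


if __name__ == "__main__":
    for level, rate in sorted(failure_rate_per_level(events).items()):
        print(f"{level}: {rate:.0%} of attempts failed")
```

This tells you which level has the highest failure rate, but nothing about why players fail there.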

Surveys

Many studies use surveys to track players’ opinions – for example asking for ratings after every level or encounter, about their overall experience, the difficulty, the pacing, or other opinion questions.

Surveys’ strengths are in benchmarking. You can ask players to rate what they have just done, gather a lot of responses, and then compare ratings between levels, characters, or games, with enough responses to check whether the differences are statistically significant.
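To make the benchmarking idea concrete, here is a minimal sketch of one way to compare difficulty ratings between two levels: a permutation test on the difference in mean rating, using only the Python standard library. This is one possible approach, not a prescribed one. The ratings are made up for illustration, and because ratings are ordinal, comparing means is a simplification; a rank-based test may suit your data better.

```python
import random


def permutation_test(ratings_a, ratings_b, n_permutations=10_000, seed=0):
    """Two-sided permutation test on the difference in mean rating.

    Returns (observed_difference, approximate_p_value), where the
    difference is mean(ratings_a) - mean(ratings_b).
    """
    rng = random.Random(seed)
    n_a = len(ratings_a)
    observed = sum(ratings_a) / n_a - sum(ratings_b) / len(ratings_b)
    pooled = list(ratings_a) + list(ratings_b)
    extreme = 0
    for _ in range(n_permutations):
        # Randomly reassign ratings to the two levels and recompute the difference.
        rng.shuffle(pooled)
        diff = sum(pooled[:n_a]) / n_a - sum(pooled[n_a:]) / (len(pooled) - n_a)
        if abs(diff) >= abs(observed):
            extreme += 1
    # Add-one correction so the estimated p-value is never exactly zero.
    return observed, (extreme + 1) / (n_permutations + 1)


if __name__ == "__main__":
    # Made-up 1-5 difficulty ratings, for illustration only.
    level_a = [2, 3, 3, 2, 4, 3, 2, 3]
    level_b = [4, 5, 4, 3, 5, 4, 4, 5]
    difference, p_value = permutation_test(level_a, level_b)
    print(f"Mean difference: {difference:.2f}, p-value: {p_value:.4f}")
```

A small p-value suggests the gap in ratings is unlikely to be chance alone. It still does not tell you why one level is rated as harder.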

Like other quantitative methods, surveys struggle to explain ‘why’ those ratings occurred. Even when free-text questions are used, the responses are often too shallow to provide direction (compared to dedicated qualitative methods).

There’s a slightly longer introduction to games user research methods in this free extract from the book How To Be A Games User Researcher.

🚀 Action: Pick the right method for your study using the flowchart.
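The two categorisation questions and the four method buckets can be sketched as a simple lookup. This is my reading of the method descriptions above, not a reproduction of the flowchart: the mapping in `METHOD_FOR`, the class names, and the way each example objective is categorised are all assumptions for illustration.

```python
from dataclasses import dataclass
from enum import Enum


class QuestionType(Enum):
    MEASUREMENT = "measurement"      # how many, how often, how is it rated
    UNDERSTANDING = "understanding"  # why did it happen


class DataType(Enum):
    BEHAVIOUR = "behaviour"  # what players actually did
    OPINION = "opinion"      # what players say they think


# Assumed mapping, inferred from the strengths described for each method.
METHOD_FOR = {
    (QuestionType.UNDERSTANDING, DataType.BEHAVIOUR): "observation",
    (QuestionType.UNDERSTANDING, DataType.OPINION): "interviews",
    (QuestionType.MEASUREMENT, DataType.BEHAVIOUR): "analytics",
    (QuestionType.MEASUREMENT, DataType.OPINION): "surveys",
}


@dataclass(frozen=True)
class Objective:
    question: str
    question_type: QuestionType
    data_type: DataType


def pick_method(objective: Objective) -> str:
    """Return the starting-point method for a categorised research objective."""
    return METHOD_FOR[(objective.question_type, objective.data_type)]


# Example objectives taken from this post; the categories are my own judgement.
objectives = [
    Objective("Why did players fail this level?",
              QuestionType.UNDERSTANDING, DataType.BEHAVIOUR),
    Objective("What do players think about the main character?",
              QuestionType.UNDERSTANDING, DataType.OPINION),
    Objective("How many times did players fail?",
              QuestionType.MEASUREMENT, DataType.BEHAVIOUR),
    Objective("How do players rate the difficulty of this level?",
              QuestionType.MEASUREMENT, DataType.OPINION),
]

if __name__ == "__main__":
    for objective in objectives:
        print(f"{objective.question} -> {pick_method(objective)}")
```

Treat the result as a starting point only: most real playtests combine more than one method.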

Combine methods to draw strong conclusions

One of the first things you learn when starting to run playtests is that there is no one perfect method. The ‘complete truth’ is multi-faceted, beyond what you can tell just by watching people, or just by measuring behaviour alone.

This can be seen in the methods described above – each has its own strengths and weaknesses.

Playtests often combine methods to overcome the weakness of each. For example you might watch a player complete a section, then give them a survey and ask them some interview questions. This allows you to draw complete conclusions that cover not just “what happened” but also “why it happened”, and have enough detail to make appropriate design decisions.

My free guide Playtest Plus gives clear guidance on what playtests to run, and which methods to employ throughout development – get it at the end of this article.

Pragmatic Playtesting

When time is short, it might be necessary not to use the ‘perfect’ method. Compromises will need to be made. For example watching a video of someone playing, when you’d rather be watching them live, or prioritising a lab-based study over a more appropriate quantitative study when worried about leaks.

This is particularly pressing for longer retention-based studies – a ‘perfect’ set-up of watching multiple players play through long campaigns quickly becomes unfeasible, generating weeks of video footage. This might push teams to less appropriate research methods, such as surveys.

That’s okay, we have to be pragmatic with our playtests – breaking the rules (carefully) is fine once you understand them, and can take some steps to mitigate the risks!

What is the best playtest method?

I’ve described how the ‘research objective’ should guide your decision about which method is appropriate. If you’re lost though, I think the easiest method to get right is watching someone play, and occasionally asking them questions. This gives you the most direct access to real player behaviour and allows you to interpret why it happened – revealing a lot of rich data and inspiration.

Integrate player insight throughout development

Every month, get sent the latest article on how to plan and run efficient high quality playtests to de-risk game development. And get Steve Bromley’s free course today on how to get your first 100 playtesters for teams without much budget or time.

Plus your free early-access copy of ‘Playtest Plus’ – the essential guide to the most impactful playtests to run throughout development of your game